Tokenomics Intelligence Infrastructure

The Tokenomics Intelligence Layer for Institutional Crypto

Token supply must be clear. Tokenomist unifies vesting, on-chain claims, and emission forecasts through 2036 in one platform, covering 80% of crypto market cap.


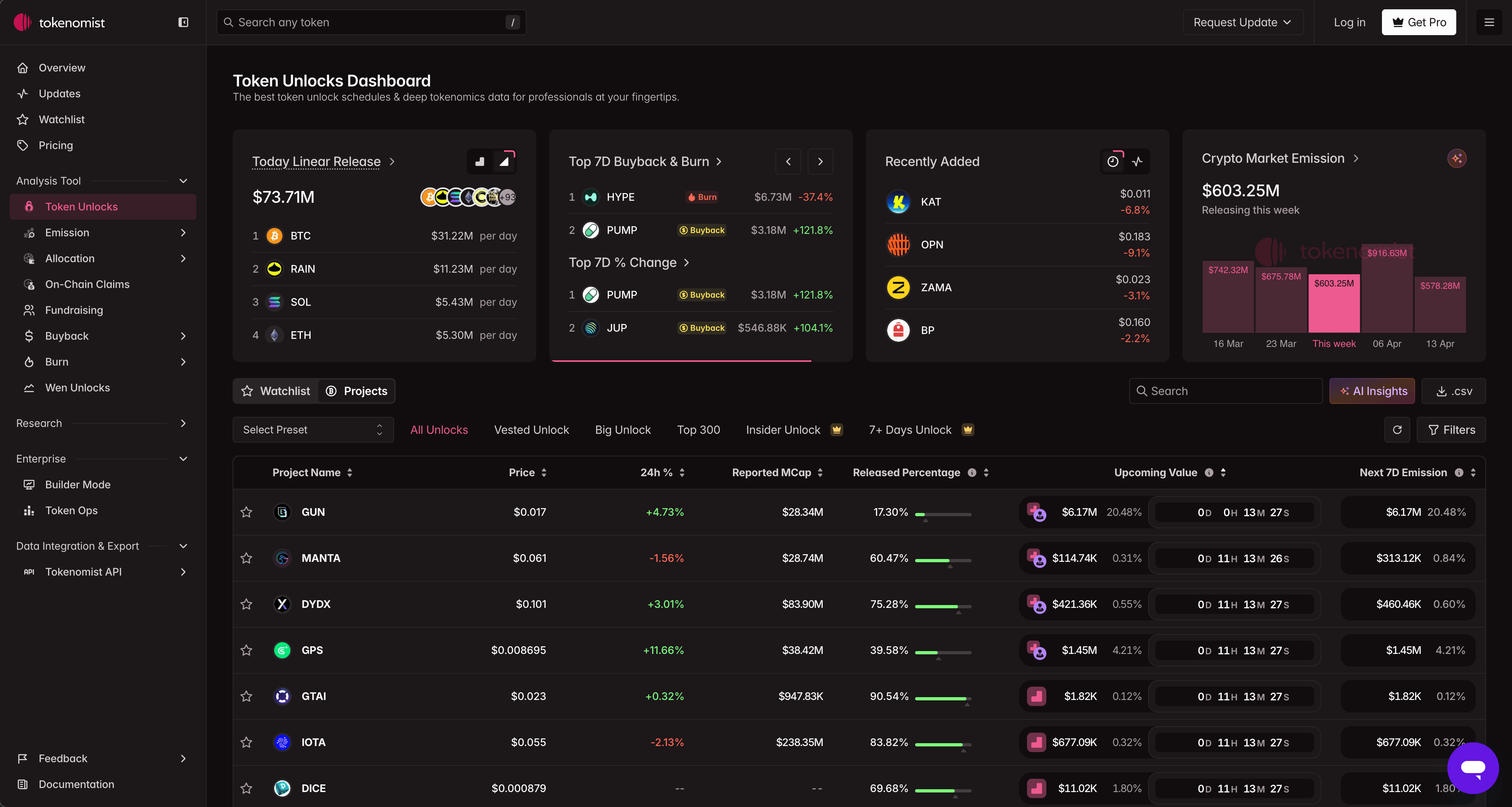

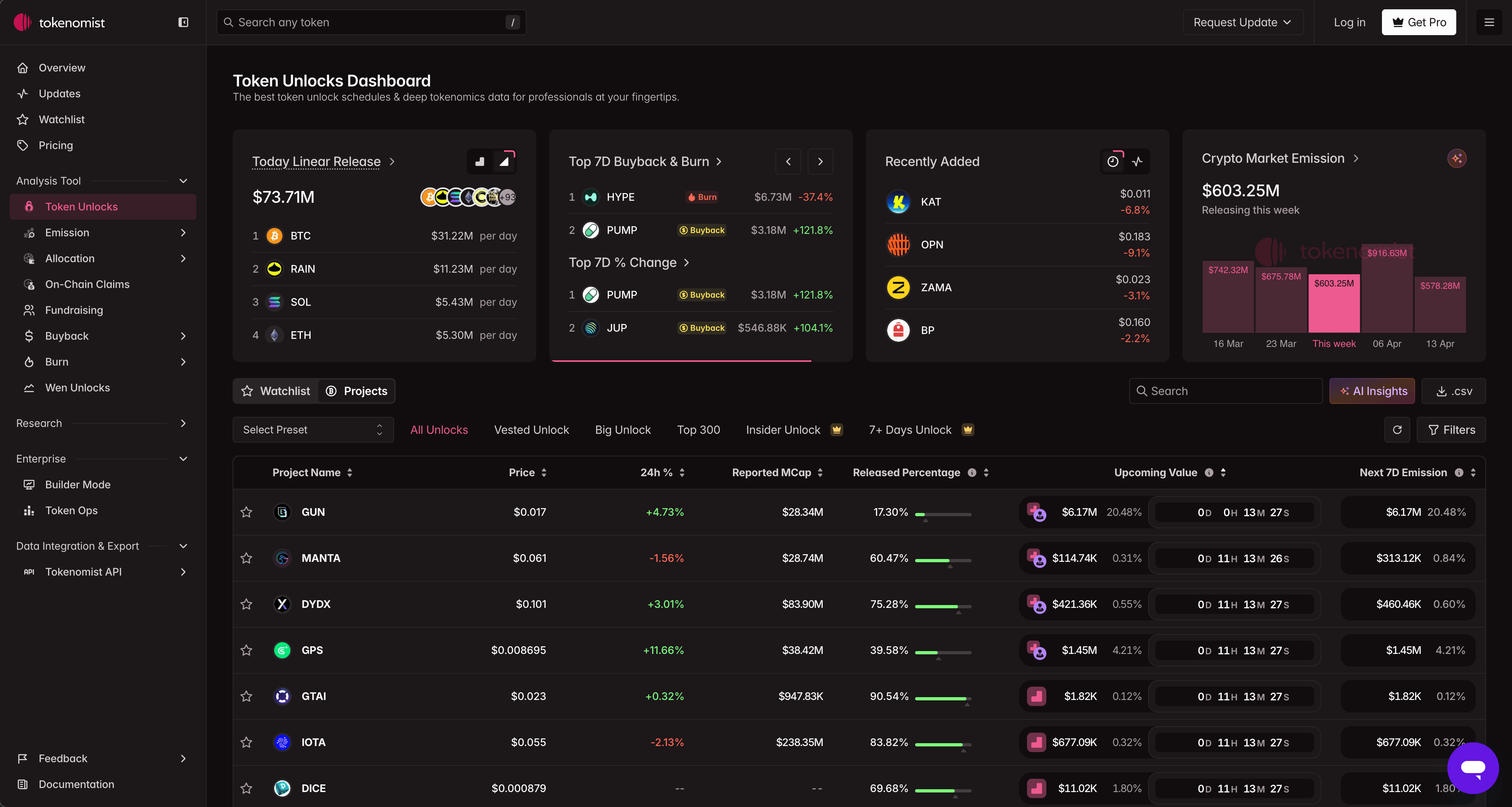

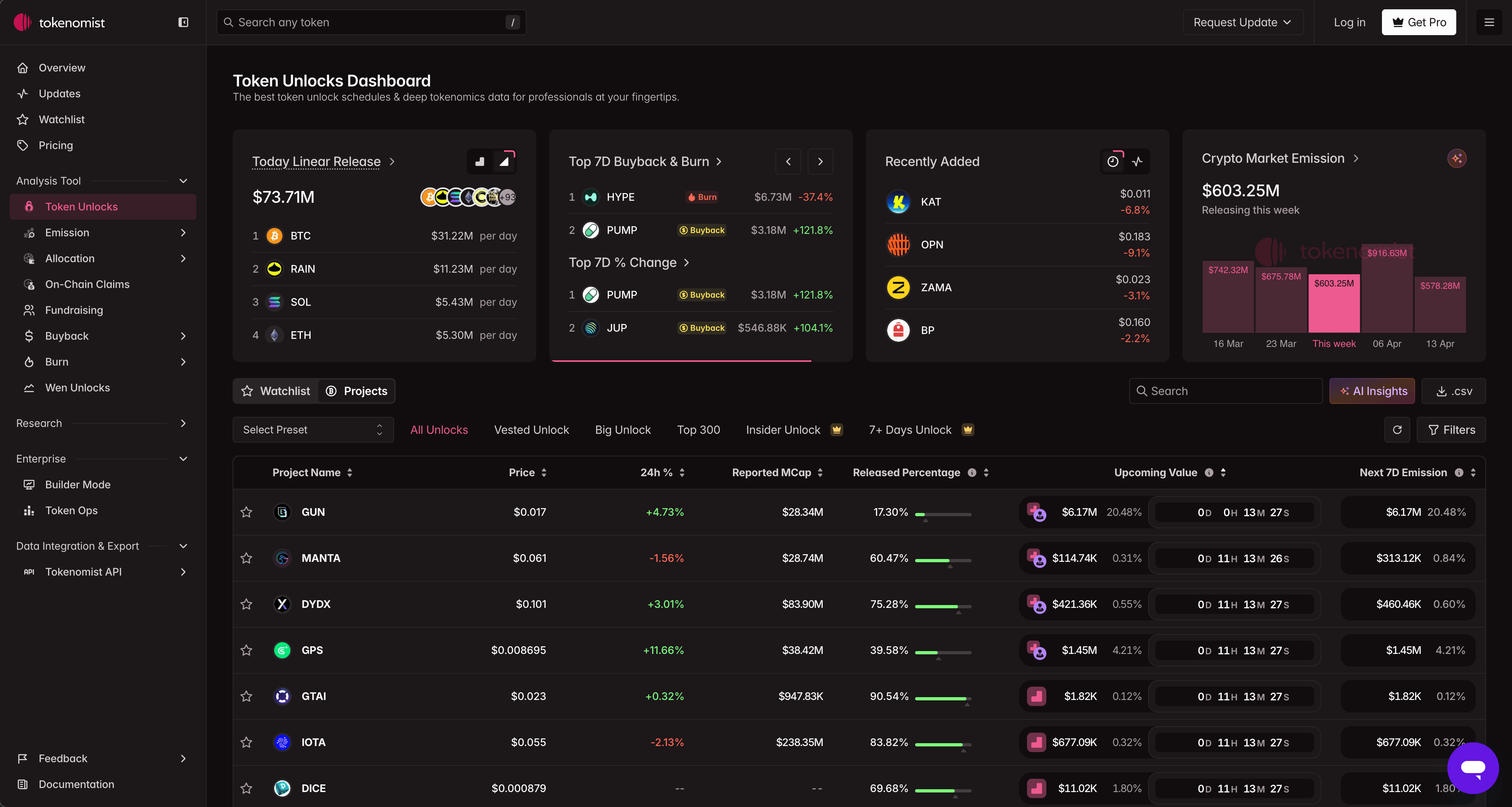

PRODUCT

Crafted by the Tokenomist team, iterating closely with our partners, to turn a year of crypto data into insights that matter for both institutional researchers and individual traders.

TOKENOMIST API

Built on Tokenomist API

Focal - FalconX

Business Development

A GenAI platform purpose-built to help institutional investors make sharper, faster decisions.

Coingecko Partnership

Founder

Bringing tokenomics intelligence to the masses

Explore on Tokenomist.ai

RESEARCH

Tokenomist Annual Report

Created by the Tokenomist team with partners, turning a year of crypto data into key insights for researchers and traders.

Annual Report 2022

Annual Report 2023

Annual Report 2024

Annual Report 2025

TOKENOMIST RESEARCH BRIEF

Supply-side research. Every week.

Unlock schedules, vesting breakdowns, and emission tracking, aggregated from multiple sources and verified for accuracy. Published weekly.


RESEARCH

Why Tokenomist exists.

Tokenomics data is inconsistent, undisclosed, and rarely standardized. We fix that — with methodology, not just aggregation.

01 The data quality problem

Digital asset projects operate without standardized disclosure requirements. Vesting data originates from on-chain contracts, public announcements, private project confirmations, or on-chain inference. No single source is authoritative. No source is guaranteed accurate. Most data vendors do not disclose which applies.

02 Our methodology

Every data point we publish carries two explicit labels: Assumption — the source type — and Precision — the timing confidence level. A vesting-contract-verified event at second-level precision and an inferred on-chain estimate at monthly precision are both publishable. They are not equivalent, and we never represent them as such.
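As a rough illustration of the two-label idea, the sketch below models a single unlock event that carries both an Assumption and a Precision label. Everything here is hypothetical and is not the Tokenomist API or schema: the class names, field names, and the `describe` helper are invented for this example, the four source types simply mirror the sources listed in the data quality section, and the intermediate `DAY` level is assumed (the text only mentions second-level and monthly precision).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Assumption(Enum):
    """Source type of a data point (hypothetical names, one per source listed above)."""
    VESTING_CONTRACT = "vesting_contract"
    PUBLIC_ANNOUNCEMENT = "public_announcement"
    PROJECT_CONFIRMATION = "project_confirmation"
    ONCHAIN_INFERENCE = "onchain_inference"


class Precision(Enum):
    """Timing confidence level. DAY is an assumed intermediate level."""
    SECOND = "second"
    DAY = "day"
    MONTH = "month"


# How much of the timestamp is shown at each precision level.
_TIME_FORMATS = {
    Precision.SECOND: "%Y-%m-%d %H:%M:%S",
    Precision.DAY: "%Y-%m-%d",
    Precision.MONTH: "%Y-%m",
}


@dataclass(frozen=True)
class UnlockEvent:
    """One token unlock, always published together with both labels."""
    token: str
    amount: float
    timestamp: datetime
    assumption: Assumption
    precision: Precision

    def describe(self) -> str:
        # Render the timestamp no more precisely than the label allows.
        when = self.timestamp.strftime(_TIME_FORMATS[self.precision])
        return (
            f"{self.token}: {self.amount:,.0f} at {when} "
            f"({self.assumption.value}, {self.precision.value} precision)"
        )


ts = datetime(2030, 1, 15, 12, 30, 5, tzinfo=timezone.utc)
verified = UnlockEvent("ABC", 1_000_000, ts, Assumption.VESTING_CONTRACT, Precision.SECOND)
inferred = UnlockEvent("ABC", 1_000_000, ts, Assumption.ONCHAIN_INFERENCE, Precision.MONTH)

print(verified.describe())
print(inferred.describe())
```

Both events are publishable, but because the labels are part of the record, the two never compare as equal and the inferred one is never displayed with more timing detail than its precision supports.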

03 The mandate

Apply the data standards that institutional fixed-income and equity markets take for granted — to digital asset supply mechanics. Token supply schedules, allocation structures, and emission curves carry the same investment relevance as corporate debt covenants. They warrant the same rigor.

THE TEAM

Specialists in supply mechanics.

DAOSurv combines quantitative research, on-chain data engineering, and capital markets experience — focused exclusively on tokenomics infrastructure.

Incorporated in Singapore. Operating globally. Our analysts read the vesting contracts, verify on-chain events, and label every data point with its source and precision level before it reaches the platform.

About the team

Data and Research

Vesting contract analysis, on-chain verification, allocation modeling, and emission schedule standardization across 400+ tokens.

Engineering

Real-time data pipelines, API infrastructure, on-chain event monitoring, and platform development.

Analytics

Demand metrics, supply-pressure modeling, historical precedent datasets, and institutional reporting tools.

Operations

Product, customer success, and institutional partnerships — ensuring data accuracy reaches users reliably.

